Digital fog of war describes a modern form of battlefield uncertainty created by artificial intelligence systems, automated analytics, and large-scale digital information flows. In traditional military theory, the “fog of war” refers to the confusion and uncertainty experienced during conflict due to incomplete or unreliable information. The concept dates back to the military strategist Carl von Clausewitz, who described how commanders must make decisions with imperfect knowledge about the enemy and battlefield conditions.

Today, artificial intelligence is supposed to reduce that uncertainty by analyzing massive volumes of data. However, a new challenge has emerged. AI systems sometimes generate inaccurate conclusions or fabricated information, a phenomenon known as hallucination. When these systems analyze conflicts such as tensions involving Iran or other geopolitical crises, the result can be a new kind of uncertainty: the digital fog of war.

Why AI Hallucinates During Conflict Analysis

Large language models and generative AI systems are trained to produce coherent responses based on patterns in massive datasets. When they lack reliable information, they may generate plausible but incorrect outputs instead of admitting uncertainty. This phenomenon occurs because many AI models are optimized to provide answers rather than say “I don’t know.”
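The difference between always answering and being allowed to abstain can be illustrated with a small sketch. It assumes a hypothetical setup in which each candidate answer carries a confidence score between 0 and 1. Real language models do not necessarily expose calibrated scores like this, and the function name and threshold below are illustrative only.

```python
def answer_or_abstain(candidates, threshold=0.75):
    """Return the best-scoring answer, or abstain when confidence is low.

    `candidates` is a hypothetical mapping of answer text to a confidence
    score in [0, 1]; the 0.75 threshold is an arbitrary illustrative value.
    """
    if not candidates:
        return "I don't know"
    # Pick the answer with the highest confidence score.
    best_answer, best_score = max(candidates.items(), key=lambda item: item[1])
    # A system tuned to always answer would return best_answer regardless;
    # this version abstains when the score falls below the threshold.
    if best_score < threshold:
        return "I don't know"
    return best_answer
```

With a threshold in place, a weakly supported guess is replaced by an explicit abstention instead of being presented as a confident answer.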

During fast-moving geopolitical events, reliable data may be scarce or contradictory. AI systems pulling from fragmented sources may produce confident narratives that are not actually accurate.

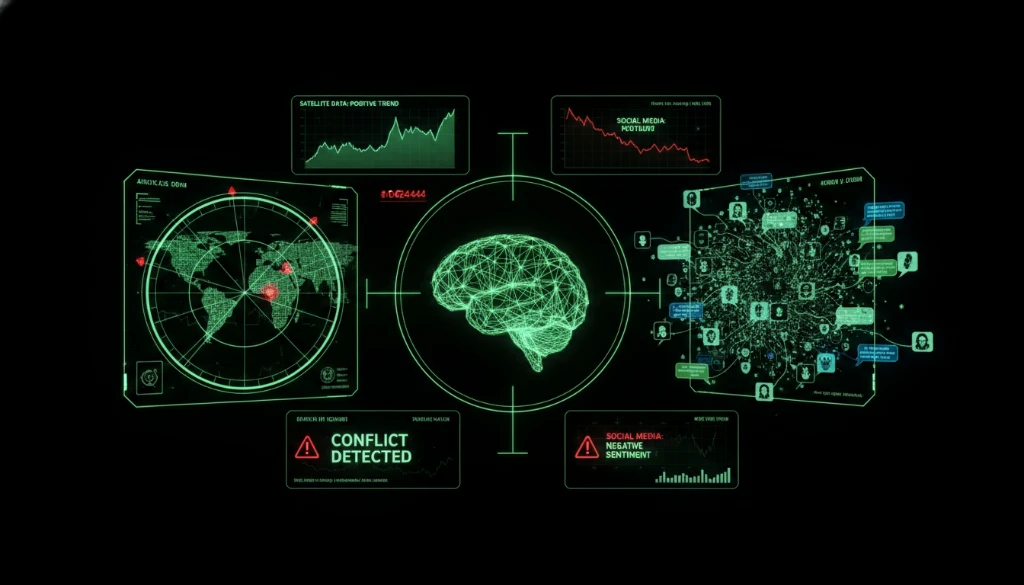

1. Conflicting Data Sources

Wars generate enormous amounts of digital content including satellite images, social media posts, intelligence reports, and propaganda. AI systems processing this information can struggle to separate verified data from manipulated content.

For example, AI-generated or manipulated images have circulated during conflicts involving Iran and other regions, creating confusion about what events actually occurred.

2. Speed Over Verification

Artificial intelligence excels at processing information quickly, but speed can sometimes come at the cost of accuracy. Military analysts and news platforms increasingly use AI to process satellite imagery, rank threats, and analyze intelligence signals in real time.

However, rapid automated analysis may amplify incorrect assumptions before human analysts have time to verify them.

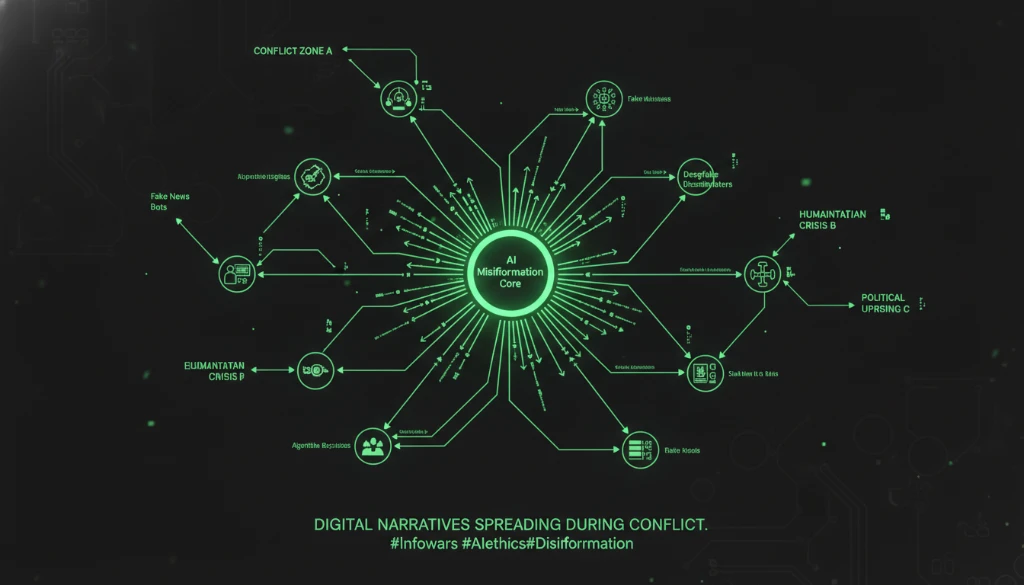

3. Algorithmic Amplification of Narratives

Digital platforms use algorithms to prioritize content that spreads quickly. During geopolitical conflicts, this can amplify dramatic narratives, misleading images, or emotionally charged misinformation.

AI-generated explanations can also appear persuasive even when the underlying information is incorrect, making misinformation more convincing to audiences.

4. AI-Assisted Information Warfare

Governments and political actors increasingly use AI tools to shape narratives online. Automated content generation, synthetic videos, and AI-generated social media campaigns can influence public perception during conflicts.

In some cases, networks of fake accounts or AI-generated media have been used to manipulate narratives surrounding geopolitical events.

5. Overreliance on AI Decision Support

Another risk arises when organizations rely too heavily on automated systems for intelligence interpretation. AI systems analyzing battlefield data may produce confident predictions that appear authoritative but are based on incomplete information.

Researchers warn that AI hallucinations can create a deceptive sense of clarity while actually increasing uncertainty in military decision-making.

Managing the Digital Fog of War

Reducing the impact of AI hallucinations requires strong verification processes and human oversight. Analysts must cross-check AI-generated insights against multiple data sources and treat automated outputs as recommendations rather than definitive conclusions.
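As a rough illustration of the cross-checking described above, the sketch below marks an AI-generated claim as corroborated only when at least two distinct non-AI sources report the same thing. The `Report` type, the source labels, and the two-source threshold are all assumptions made for this example, not a description of any real intelligence workflow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    source: str         # hypothetical source label, e.g. "satellite"
    claim: str          # claim text, assumed already normalized
    ai_generated: bool  # True if the report itself came from an AI system


def corroborated_claims(reports, min_sources=2):
    """Return claims backed by at least `min_sources` distinct non-AI sources."""
    sources_by_claim = {}
    for report in reports:
        if report.ai_generated:
            continue  # AI output is a lead to check, not independent evidence
        sources_by_claim.setdefault(report.claim, set()).add(report.source)
    return {
        claim
        for claim, sources in sources_by_claim.items()
        if len(sources) >= min_sources
    }


def triage(ai_claims, reports, min_sources=2):
    """Label each AI claim as corroborated or as needing human review."""
    confirmed = corroborated_claims(reports, min_sources)
    return {
        claim: "corroborated" if claim in confirmed else "needs human review"
        for claim in ai_claims
    }
```

Anything the check cannot corroborate is routed to a human analyst, not discarded or accepted, which keeps the automated output in the role of a recommendation.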

Defense organizations are also investing in monitoring systems, red-team testing, and human-AI collaboration models designed to detect hallucinations before they influence decisions.

Conclusion: Intelligence Still Requires Human Judgment

Digital fog of war illustrates a paradox of modern technology. Artificial intelligence can process more information than any human analyst, yet it can also generate convincing errors. During geopolitical crises such as conflicts involving Iran, the speed and scale of digital information make this challenge even more significant.

AI will remain a powerful tool for intelligence analysis, but human judgment, verification, and critical thinking remain essential to separating reliable insight from algorithmic illusion.

Learn how DB Soft Tech develops responsible AI and data intelligence systems on our About Us page.

Ready to build secure AI-powered analytics for your organization? Contact DB Soft Tech to design trustworthy digital intelligence platforms.